Is Your Client Data Training the Competition?

We’ve all been there: you have a mountain of messy data, a complex legal brief to summarize, or a sensitive client email that needs some “polishing.” It’s tempting to paste it into the nearest (free) AI assistant and let the magic happen.

But here is the cold, hard truth: If the product is free, your data is the payment.

When you use the free versions of popular AI tools, you aren’t just a user; you’re a contributor. The information you feed these models often becomes part of their global training set. This is what we call “The AI Leak,” and it’s one of the biggest sleeper risks to proprietary data today.

The Problem: Your Secrets are Now “Public Knowledge”

Most free AI platforms opt you in to data training by default, so you stay enrolled until you opt out yourself. Until you do, any sensitive client information, trade secrets, or proprietary code you input can be used to “improve” the model.

Why this matters:

- Prompt Leakage: In theory, your confidential strategy could influence an answer the AI gives to a competitor three months from now.

- Shadow IT: Your employees might be using these tools without realizing they are bypassing your company’s security protocols.

- Compliance Violations: If you’re handling PII (Personally Identifiable Information) or are under an NDA, pasting that data into a free AI tool could put you in legal hot water.

The Solution: The “Tonight” Checklist

You don’t have to quit AI to stay safe, but you do need to be intentional. Protecting your proprietary data starts with two simple moves:

1. Toggle “Off” the Training

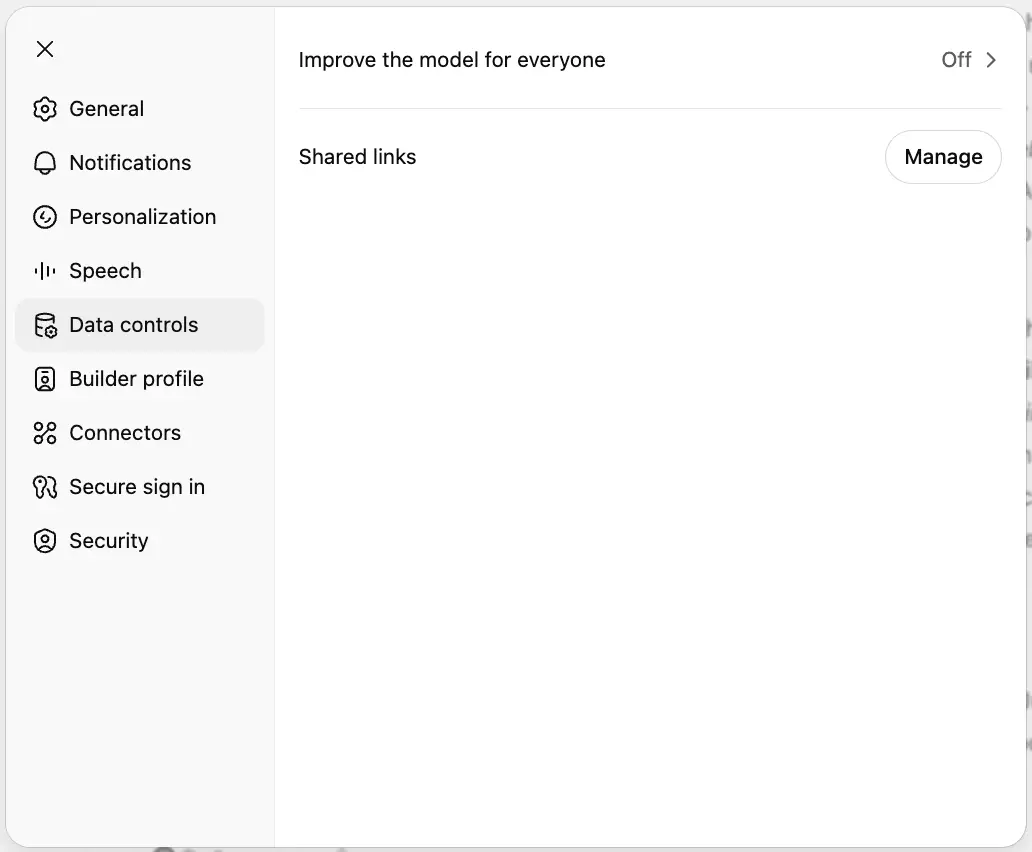

Take ten minutes tonight to audit your accounts. Most major AI players (OpenAI, Google, Anthropic) give you a way to opt out, though they don’t always make the button big and shiny.

- ChatGPT: Go to Settings > Data Controls and toggle off “Improve the model for everyone.”

- Google Gemini: Look for “Gemini Apps Activity” in your settings and turn it off.

- Claude: Anthropic’s policy on training with consumer chats has changed over time, so don’t assume you are excluded by default. Check your “Privacy” settings to confirm what applies to your specific plan.

2. Consider the Upgrade

If your business relies on AI, the “free” price tag isn’t worth the risk. Paid “Team” or “Enterprise” tiers (like ChatGPT Team or Microsoft 365 Copilot) typically come with Data Processing Agreements (DPAs). These are contractual commitments about how your data is handled, and they generally state that your data is not used to train the global model. Read the terms for your specific plan rather than assuming.

The Bottom Line

AI is the most powerful assistant you’ve ever had, but it’s also the most talkative. Before you hit “Enter” on your next prompt, ask yourself: “Would I be okay with this appearing in a competitor’s search results?”

If the answer is no, check your settings tonight. Don’t let your proprietary brilliance become part of the public domain.

Pro-Tip: Treat AI like a public forum. If you wouldn’t post it on LinkedIn, don’t paste it into a free chatbot.